Building Training Data for Physical AI: From Motion Capture to Robot Learning

How to design and capture high-quality motion data for humanoid robots, manipulation tasks, and sim-to-real transfer pipelines.

By Tbrain Team

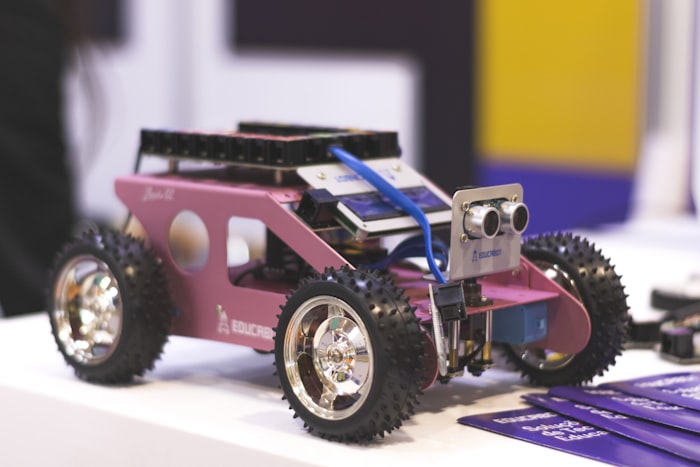

The Physical AI Data Challenge

Training robots to move like humans requires something that synthetic data alone cannot provide: ground-truth human motion captured in real-world environments.

While simulation has made enormous progress, the sim-to-real gap remains the central challenge in Physical AI. Models trained purely in simulation often fail when confronted with the messiness of the real world.

Why Real-World Data Matters

Simulated environments, no matter how sophisticated, miss the complexity of the real world:

- Contact dynamics — friction, deformation, and surface variation that physics engines approximate but never fully capture

- Environmental diversity — lighting changes, clutter, unexpected obstacles

- Human motion nuance — the subtle adjustments humans make unconsciously when picking up a glass or opening a door

- Task variation — the thousand different ways to fold a towel

Data Modalities for Robot Training

A comprehensive robotics dataset typically includes multiple synchronized modalities:

Visual Data

- Egocentric RGB video — what the robot "sees" from its perspective

- Multi-view stereo video — for 3D reconstruction

- Depth maps — LiDAR or structured light for spatial understanding

Motion Data

- Optical motion capture (MOCAP) — gold-standard skeletal tracking

- 3D hand pose — 21+ joint positions tracked in real-time

- Full-body skeletal tracking — for locomotion and coordination

- IMU data — inertial measurements for balance and orientation

Interaction Data

- Force/torque sensing — for manipulation tasks

- Object 6DoF pose — tracking every object the robot interacts with

- Gripper state — open/close, force applied

- Task annotations — start/end, success/failure, key events
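
To make "synchronized" concrete, here is a minimal sketch of one common approach: choose one stream as the time reference and, for each of its timestamps, take the nearest sample from every other modality. The function names, the 5 ms skew limit, and the sample data are illustrative assumptions, not part of any specific capture system, and the sketch assumes every stream is non-empty and sorted by time.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Return the index of the timestamp closest to t (sorted, non-empty list)."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer to t.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def align_streams(reference_ts, streams, max_skew_s=0.005):
    """For each reference timestamp, pick the nearest sample from every stream.

    streams maps a modality name to (sorted_timestamps, samples).
    A modality is set to None for a frame when its nearest sample is
    further than max_skew_s (an assumed limit) from the reference time.
    """
    frames = []
    for t in reference_ts:
        frame = {"t": t}
        for name, (ts, samples) in streams.items():
            j = nearest_index(ts, t)
            frame[name] = samples[j] if abs(ts[j] - t) <= max_skew_s else None
        frames.append(frame)
    return frames


# Hypothetical data: ~30 Hz video as reference, 100 Hz IMU, sparse force/torque.
video_ts = [0.000, 0.033, 0.067]
streams = {
    "imu": ([0.00, 0.01, 0.02, 0.03, 0.04, 0.05, 0.06, 0.07], list(range(8))),
    "force_torque": ([0.001, 0.050], ["ft0", "ft1"]),
}
frames = align_streams(video_ts, streams)
```

Marking a modality as missing rather than silently reusing a stale sample keeps gaps visible to downstream training code, which can then decide whether to interpolate or drop the frame.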

Accuracy Requirements by Task

Not all tasks need the same precision:

| Task Type | Accuracy Needed | Typical Capture Method |
|---|---|---|
| Locomotion | 5–10 mm | Depth sensors, IMU |
| General manipulation | 2–5 mm | Depth + MOCAP |
| Fine manipulation | < 1 mm | Optical MOCAP |
| Teleoperation | Joint-level | Direct sensor readings |
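
The table can be turned into a simple acceptance check for a capture setup. The sketch below is one possible encoding: it takes the upper bound of each range as the limit, treats the fine-manipulation bound as strict, and leaves out teleoperation because its requirement is joint-level rather than a positional tolerance. The dictionary keys and the function name are hypothetical.

```python
import operator

# Positional limits in millimetres, taken from the upper bound of each table row.
# The comparison operator records whether the bound is inclusive or strict.
ACCURACY_LIMITS_MM = {
    "locomotion": (10.0, operator.le),
    "general_manipulation": (5.0, operator.le),
    "fine_manipulation": (1.0, operator.lt),  # table says "< 1 mm"
}


def meets_accuracy(task_type: str, error_mm: float) -> bool:
    """Return True when a measured error is acceptable for the task type."""
    limit, compare = ACCURACY_LIMITS_MM[task_type]
    return compare(error_mm, limit)


print(meets_accuracy("locomotion", 8.0))         # within the 5-10 mm band
print(meets_accuracy("fine_manipulation", 1.0))  # exactly 1 mm is not "< 1 mm"
```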

Capture Pipeline Best Practices

1. Environment Design

Capture in environments that match deployment conditions. Kitchen data should come from real kitchens — not lab mockups with perfect lighting.

2. Task Diversity

A single task performed 1,000 times is generally less valuable than 100 different tasks performed 10 times each, even though both yield 1,000 episodes. Diversity in initial conditions, object arrangements, and execution styles matters enormously for generalization.
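
One way to build this kind of diversity into a capture schedule is to generate it up front. The following is a rough sketch, assuming each episode is described by a task, an object layout, and an execution style; those three axes, the function name, and the example values are illustrative choices rather than a prescribed taxonomy.

```python
import itertools
import random


def build_capture_plan(tasks, layouts, styles, repeats_per_task, seed=0):
    """Return a list of (task, layout, style) episodes.

    Each task gets repeats_per_task episodes, drawn without replacement
    from the layout x style combinations so that no two repeats of the
    same task share the same conditions.
    """
    rng = random.Random(seed)  # fixed seed keeps the plan reproducible
    combos = list(itertools.product(layouts, styles))
    if repeats_per_task > len(combos):
        raise ValueError("not enough distinct layout/style combinations")
    plan = []
    for task in tasks:
        for layout, style in rng.sample(combos, repeats_per_task):
            plan.append((task, layout, style))
    return plan


# Hypothetical inputs: 100 tasks x 10 repeats, matching the example in the text.
tasks = [f"task_{i:03d}" for i in range(100)]
layouts = ["tidy", "cluttered", "left_handed", "low_light", "occluded"]
styles = ["slow", "normal", "fast"]
plan = build_capture_plan(tasks, layouts, styles, repeats_per_task=10)
print(len(plan))  # 100 tasks * 10 repeats
```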

3. Validation Protocol

Every capture session should include a calibration sequence. Accuracy must be validated against known reference poses before scaling to production.
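
As a minimal sketch of such a check, the code below compares measured marker positions against known reference positions and reports the RMS error in millimetres. It covers position only; a fuller protocol would also check orientation. The function names, the sample coordinates, and the 1 mm tolerance are assumptions for illustration.

```python
import math


def rms_position_error_mm(measured, reference):
    """RMS Euclidean distance between paired 3D points, in the input units (mm)."""
    if len(measured) != len(reference) or not measured:
        raise ValueError("need two non-empty point lists of equal length")
    squared = [math.dist(m, r) ** 2 for m, r in zip(measured, reference)]
    return math.sqrt(sum(squared) / len(squared))


def validate_calibration(measured, reference, tolerance_mm):
    """Return (passed, rms_error_mm) for one calibration sequence."""
    error = rms_position_error_mm(measured, reference)
    return error <= tolerance_mm, error


# Hypothetical calibration fixture with three reference points (mm).
reference = [(0.0, 0.0, 0.0), (100.0, 0.0, 0.0), (0.0, 100.0, 0.0)]
measured = [(0.3, 0.0, 0.0), (100.0, 0.4, 0.0), (0.0, 100.0, 0.0)]

passed, error = validate_calibration(measured, reference, tolerance_mm=1.0)
print(passed, round(error, 3))
```

Running this at the start of every session, and refusing to record when it fails, turns the validation rule into a gate rather than a guideline.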

4. Annotation Standards

Raw motion data needs structured annotations: task boundaries, success/failure labels, key event timestamps, and object state changes.
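
The fields listed above map naturally onto a small, validated record per episode. This is a sketch of one possible schema, not an established standard: the class names, field names, and the example events are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class KeyEvent:
    t: float       # seconds from the start of the recording
    label: str     # e.g. "grasp", "release"


@dataclass
class ObjectStateChange:
    t: float
    object_id: str
    new_state: str


@dataclass
class EpisodeAnnotation:
    task: str
    start_t: float
    end_t: float
    success: bool
    key_events: list = field(default_factory=list)
    object_state_changes: list = field(default_factory=list)

    def validate(self):
        """Raise ValueError if the task boundaries or timestamps are inconsistent."""
        if self.end_t <= self.start_t:
            raise ValueError("end_t must be after start_t")
        for item in self.key_events + self.object_state_changes:
            if not self.start_t <= item.t <= self.end_t:
                raise ValueError(f"timestamp {item.t} lies outside the task")


annotation = EpisodeAnnotation(
    task="open_drawer",
    start_t=2.0,
    end_t=9.5,
    success=True,
    key_events=[KeyEvent(3.1, "grasp"), KeyEvent(8.7, "release")],
    object_state_changes=[ObjectStateChange(8.2, "drawer_01", "open")],
)
annotation.validate()
record = json.dumps(asdict(annotation))  # nested dataclasses become plain dicts
```

Validating at write time catches the most common labeling mistakes, such as an event stamped outside its task window, before they reach the training set.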

The Scale Challenge

Academic datasets typically contain 100–1,000 hours. Production robot training increasingly demands 10,000+ hours. Building capture infrastructure at this scale while maintaining quality is the defining engineering challenge of Physical AI.
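
A back-of-the-envelope calculation shows why this is an infrastructure problem. The sketch below estimates how many capture rigs are needed to reach a target number of usable hours. Every input except the 10,000-hour target is an assumed planning figure (working days, usable hours per rig per day, and the fraction of recordings that pass quality checks), so substitute your own numbers.

```python
import math


def rigs_needed(target_hours, days, usable_hours_per_rig_day, qa_pass_rate):
    """Estimate the number of capture rigs required to hit a usable-hours target."""
    # Rejected takes mean more raw footage must be recorded than is kept.
    raw_hours = target_hours / qa_pass_rate
    hours_per_rig = days * usable_hours_per_rig_day
    return math.ceil(raw_hours / hours_per_rig)


# Assumed inputs: one working year, 4 usable hours per rig per day, 80% pass rate.
print(rigs_needed(target_hours=10_000, days=250,
                  usable_hours_per_rig_day=4, qa_pass_rate=0.8))
```

Under these assumptions a 10,000-hour dataset needs roughly a dozen rigs running for a full year, and the estimate is sensitive to the pass rate, which is why quality control and scale have to be planned together.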

Conclusion

The teams that solve Physical AI will be the ones that solve the data problem. Lab-grade capture precision, real-world diversity, and production-scale pipelines are what separate research demos from robots that actually work in homes and factories.